Your Scaling Problem Might Be Architecture, Not Kubernetes

Published: March 4, 2026

What if your scaling problem has nothing to do with infrastructure?

What if the real problem is architecture?

A company once reached out for help with scaling. They already had a recommended solution from a consulting partner: move to Kubernetes. It sounded modern, safe, and serious.

There was only one issue. They did not need it yet.

The situation

The company collected telemetry from physical devices. Devices sent data to a backend, the backend processed and stored it, then exposed an API.

At first glance this looked like a textbook "we will need distributed infrastructure soon" case. But one detail changed everything: device production was still slow. So slow that the full production workload was running on one large EC2 instance.

CPU had headroom. Memory had headroom. Disk had headroom. There were no recurring outages and no obvious reliability incidents.

What existed was mostly pressure and anticipation. Scale is coming, therefore we must prepare now, therefore Kubernetes.

The assumption nobody validated

Nobody asked the most important engineering question: what will break first?

Will CPU saturate? Will memory be exhausted? Will the database become the bottleneck? Will network throughput spike?

There were no convincing load-test results. No evidence-based capacity model. Just a chain of assumptions:

More devices -> more load -> Kubernetes.

That is not architecture. That is a guess wearing technical language.

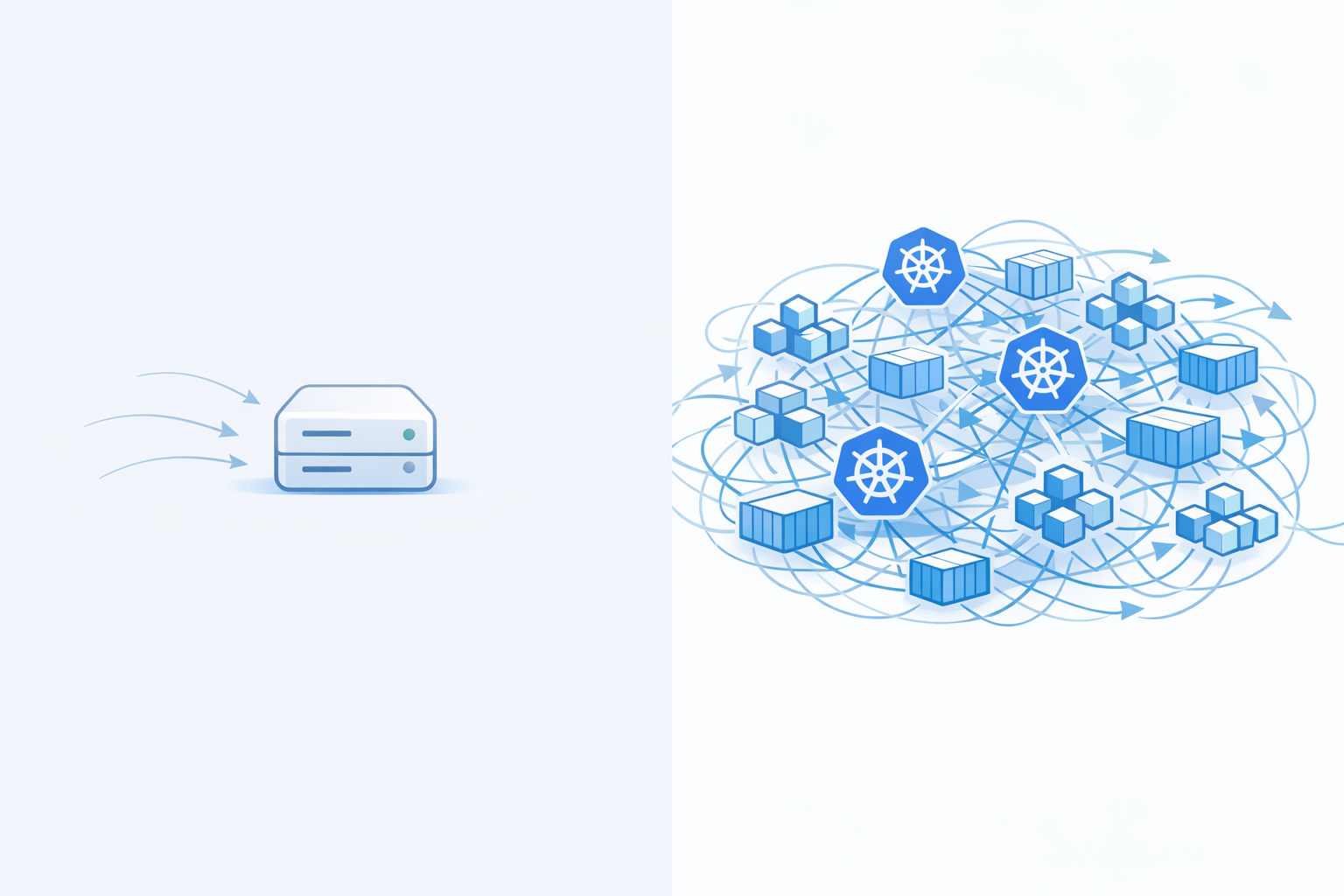

Vertical vs horizontal scaling

Scaling usually starts with two options:

- vertical scaling: bigger machine, more capacity;

- horizontal scaling: more machines, split the load.

They were still in the vertical phase, and it was working well.

Vertical scaling becomes problematic when instance costs become unreasonable, when single-host failure risk is no longer acceptable, or when hard limits are reached. They were not at that point.

So the right question was not "How do we operate a cluster?"

The right question was "Do we need more than one machine right now?"

The monolith constraint

Then came the uncomfortable part: architecture.

The system was a large monolith: ingestion, processing, API, and background jobs in one codebase with shared state and a shared database.

If you lift that into Kubernetes, what exactly are you scaling?

Everything. Every time. Even when only one area needs more resources.

Infrastructure can scale only what your design allows to scale independently. If everything is tightly coupled, everything grows together. Kubernetes cannot undo that coupling. It can only distribute the same coupling across more nodes.

Are you container-ready?

Another skipped question was operational readiness:

Can the app run cleanly in containers? Is most behavior stateless? Is persistent data externalized? Does startup stay fast and predictable? Does shutdown handle in-flight work gracefully?

If the answer is "not really", Kubernetes adds operational overhead before it adds architectural value.

You end up running the same heavy application in containers with extra orchestration complexity around it. In a small company without internal platform engineering depth, that usually means:

- more CI/CD complexity,

- more deployment logic,

- more observability surface to maintain,

- more on-call stress for the same product throughput.

All this for a bottleneck that may not even exist yet.

A calmer path that usually works

A better sequence is simple.

First, measure. Simulate realistic future load. Stress ingestion paths. Observe CPU, memory, queue latency, DB behavior, and p95/p99 response times. Identify real limits, not imagined ones.

Second, improve boundaries in the existing code. Separate ingestion concerns from processing concerns from API concerns. Even inside one deployable unit, clear boundaries reduce coupling and expose where independent scaling might later make sense.

Third, containerize for consistency, not fashion. Once packaging is stable, start with simpler orchestration choices such as ECS or Fargate.

For many teams, this already provides sufficient horizontal scaling with less platform overhead than running and operating a full Kubernetes cluster.

When Kubernetes truly makes sense

Kubernetes is excellent technology when context supports it:

- many teams deploy multiple services independently,

- workload types differ significantly,

- container-first workflows are already mature,

- deployment and rollback discipline is already strong.

In other words, when the organization is ready for that complexity tax and can convert it into delivery speed and resilience.

The real lesson

This is not an anti-Kubernetes story.

It is a reminder that infrastructure is not a substitute for architecture.

A tightly coupled system does not become loosely coupled because it runs on a cluster. A giant block of code remains a giant block of code, even if it is distributed across ten nodes.

Distributed problems are harder than local ones. Always.

If your team is unsure whether the next scaling step is architecture work, platform work, or both, I can help you map the actual bottlenecks first and choose the simplest path that keeps delivery fast.